A multilingual AI chatbot running entirely on the client’s servers. No data ever leaves the infrastructure and no external AI APIs are used.

Project overview

A FinTech organization in EU needed a conversational assistant for their web platform. The assistant had to answer user questions in multiple languages based on two data sources: a daily feed of structured records from an external API and a library of policy documents.

The primary constraint was data privacy: all processing had to stay on-site and the system had to function within limited hardware resources. The solution was built using a RAG (Retrieval-Augmented Generation) architecture.

Client: Public-sector organization in EU

Timeline: 8 weeks

Team: 2 developers, QA and PM

Status: In production

The challenge

Four constraints shaped our technical decisions:

- Data privacy.

User profiles, conversation history and source documents could not leave the client’s servers. This ruled out hosted AI APIs entirely. - Hardware budget.

The system had to run on limited GPU resources (6GB VRAM budget), limiting the size of the models used. - Languages.

The assistant had to handle many languages – switching naturally based on user input. - Live data.

The assistant needed to work with daily updated records rather than static information.

Solution architecture: four layers

| Layer | Task |

|---|---|

| Application layer | Handles routing, authentication and session management. |

| AI platform | Runs the language models locally without external network calls. |

| Vector database | Stores document representations and performs similarity searches to retrieve context. |

| Real-time transport | Streams AI responses to the frontend to keep the interface responsive. |

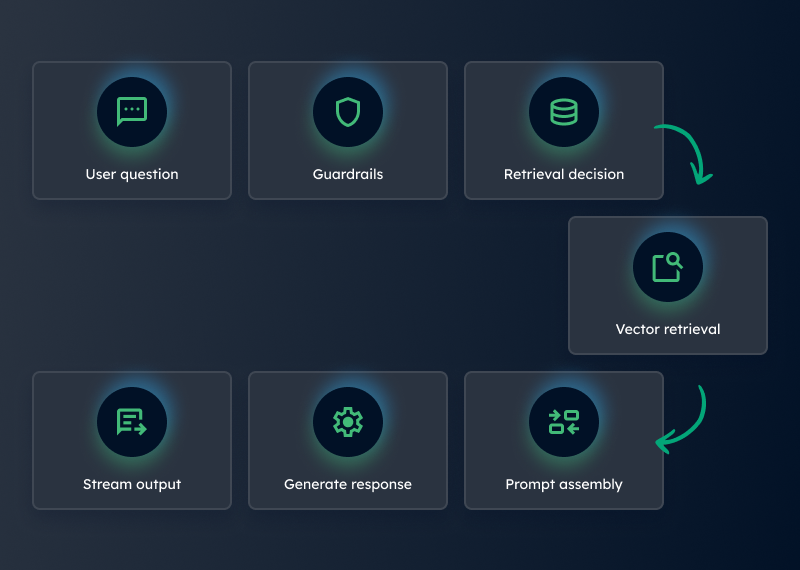

RAG pipeline flow

- 1. The user sends a message.

- 2. Guardrail layer validates the input for security.

- 3. The model determines retrieval needs: it uses specific tools to decide which data source to query.

- 4. Execution of the retrieval tool:

- The query is converted into a vector (embedded).

- The system performs a vector search to find relevant documents or records.

- 5. Prompt assembly: The retrieved context, user profile and chat history are combined into a single prompt.

- 6. Response generation: The model generates the answer based on that combined data.

- 7. Real-time streaming: The response is sent to the frontend immediately as it is generated.

Key technical challenges and how we solved them

Production AI on limited resources

Most high-end models require significant hardware. We selected a compact model with strong multilingual support and efficient performance on limited hardware. The RAG architecture compensates for the smaller model size by injecting specific context at query time.

Prompt injection defense

Smaller models can be more susceptible to user manipulation. We implemented a multi-layer defense, including vector-based guardrails that check queries against blacklists/whitelists before they reach the model and specialized prompt hardening techniques.

Context window management

To fit within data limits, every component was compressed. This included limiting conversation history, pre-summarizing documents at indexing time and stripping unnecessary fields from tool results.

Embedding quality and data processing

Source records varied in quality and length. We implemented automated parsing and filtering to clean data, used structured summarization before vectorization and manually segmented large documents by topic to ensure better retrieval accuracy.

What we built

- Document indexing.

Automated tools for loading and vectorizing policy files - Record sync pipeline.

Daily import and cleaning of external API data. - Retrieval tools.

Specialized search functions available to the AI agent. - Session management.

Systems to handle user profiles and chat history within strict constraints.

Tech stack

| Komponent | Technologia |

|---|---|

| Backend framework | Modern enterprise Symfony 8 framework |

| AI platform | Ollama – local model hosting environment (Bedrock as a an alternative) |

| Models | Multilingual language and embedding models |

| Database | PostgreSQL with pgvector – vector-enabled database solution |

| Communication | Server-Sent Events – real-time streaming and asynchronous message handling |

Results

- Efficient response times and daily synchronization of 20-30k records (1-6k updated daily) of records.

- Multilingual support. Shared vector space across languages. A user can ask a question in one language and get answers based on documents written in another – no translation layer needed.

- Total data sovereignty. Nothing leaves their infrastructure.

- Fixed, predictable infrastructure cost. No per-token API fees – the client pays only for server resources, known upfront.

This project demonstrates that a production-quality RAG assistant can run on limited, private hardware. Success depends on engineering within constraints: smart context management, robust security layers and efficient data pipelines.