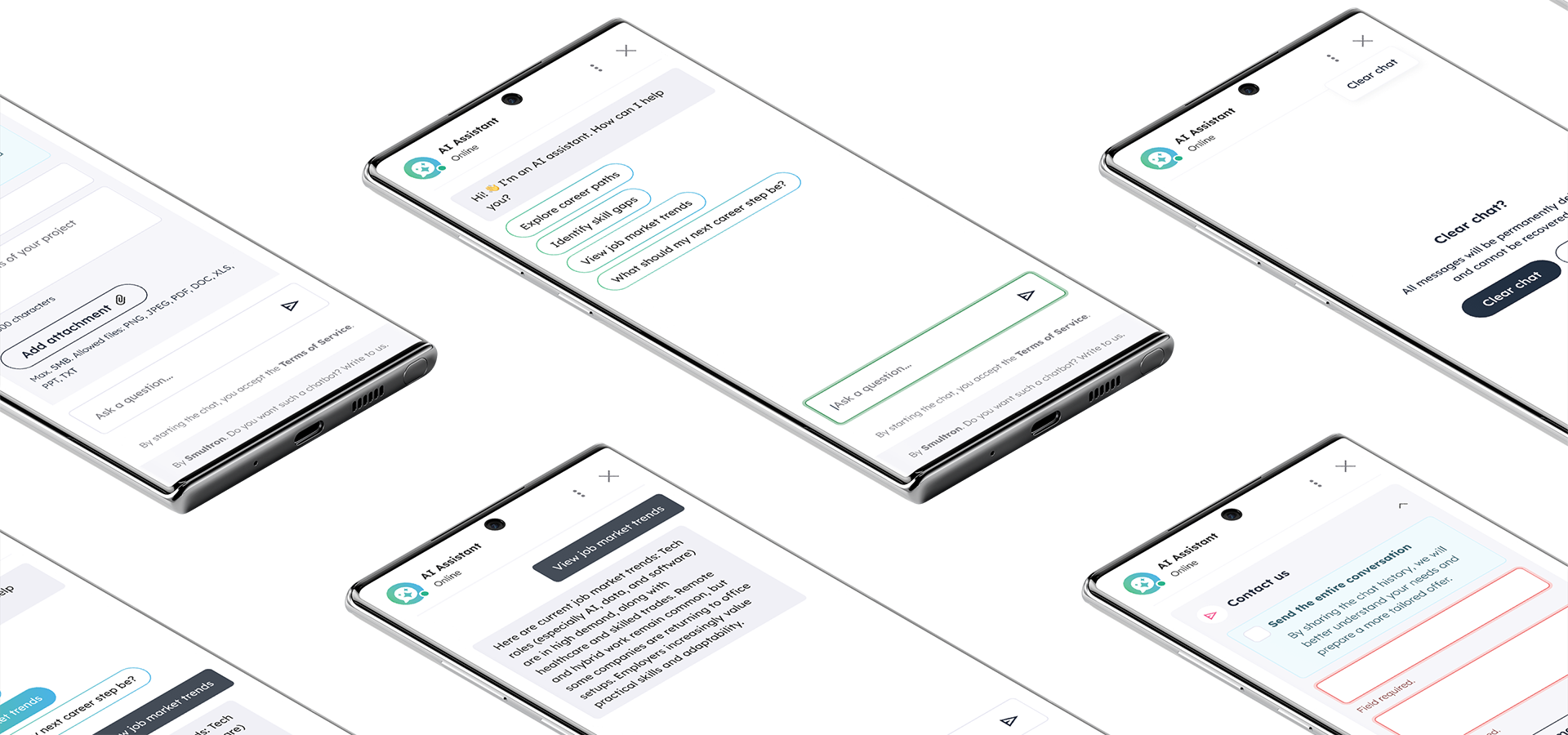

A multilingual AI chatbot running entirely on the client’s servers. No data ever leaves the infrastructure and no external AI APIs are used.

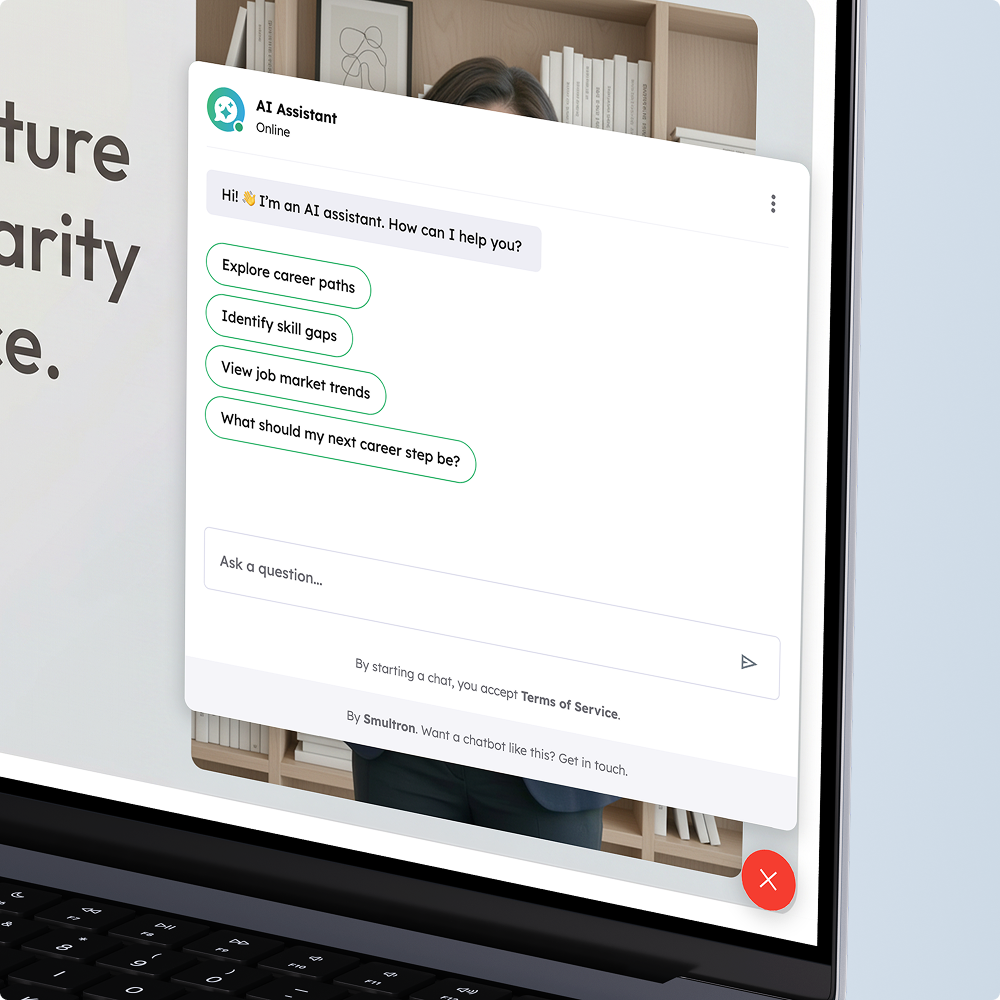

An international enterprise client required a conversational assistant supporting career planning and professional decision-making. The assistant had to answer user questions in multiple languages based on two data sources: a daily feed of structured records from an external API and a library of policy documents.

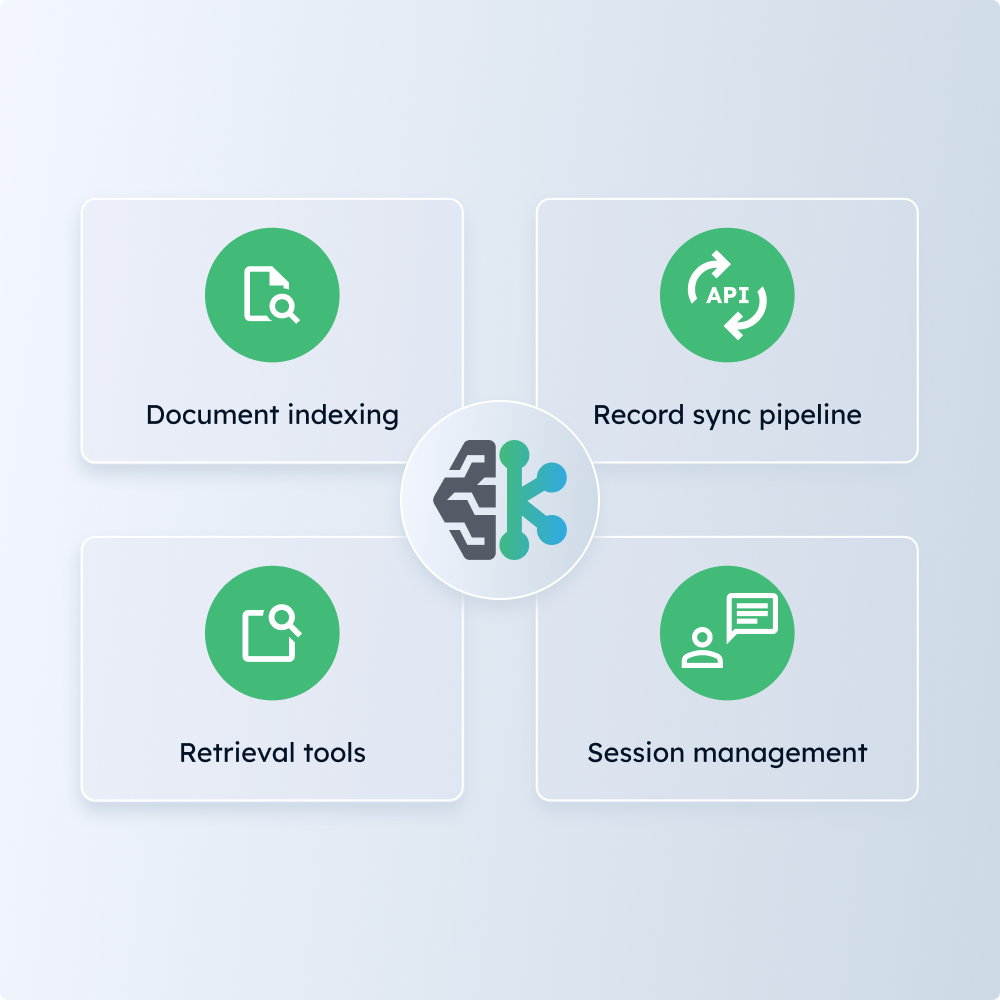

The primary constraint was data privacy: all processing had to stay on-site and the system had to function within limited hardware resources. The solution was built using a RAG (Retrieval-Augmented Generation) architecture.
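To make the RAG idea concrete, here is a minimal sketch of the retrieve-then-prompt flow over the two kinds of sources described above. It is an illustration only, not the project's implementation: the chunk texts, the `records` and `policy` labels, and the function names are all hypothetical, and simple word-overlap scoring stands in for whatever retrieval method the system actually used. A multilingual deployment would more likely rely on a locally hosted embedding model, and the final prompt would be passed to a locally hosted language model rather than an external API.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, chunks, k=2):
    """Return the k chunks most similar to the question (toy scoring)."""
    q = Counter(tokenize(question))
    ranked = sorted(
        chunks,
        key=lambda c: cosine(q, Counter(tokenize(c["text"]))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, context):
    """Assemble the prompt that would be sent to a locally hosted model."""
    sources = "\n".join(f"[{c['source']}] {c['text']}" for c in context)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{sources}\n\n"
        f"Question: {question}"
    )


# Hypothetical chunks: one from the daily structured feed, two from policy documents.
chunks = [
    {"source": "records", "text": "Data analyst role in Berlin requires SQL and Python."},
    {"source": "policy", "text": "Employees may request a career planning session once per quarter."},
    {"source": "policy", "text": "Travel expenses are reimbursed within 30 days."},
]

question = "How often can I request a career planning session?"
top = retrieve(question, chunks, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

Because both retrieval and generation run in the same process on local data, nothing in this flow requires a call outside the client's infrastructure, which is the property the privacy constraint demands.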